Current Vantage Point

I’ve been working on a human-centered design team inside an innovative IT department during the current surge of LLM-powered tools. From that vantage point, I’ve seen how enterprise AI adoption actually unfolds – the initial hype, the legal and security reviews, the pilot experiments, the skepticism, and the gradual realization of value when the technology genuinely fits.

Spending the past 7 years in a largely “buy over build” environment has sharpened my perspective. In enterprise contexts, success depends on workflow compatibility, vendor integration, stakeholder alignment, and realistic expectations.

Defining & Designing AI-Enabled Systems

At Mad*Pow, I worked on healthcare products that incorporated automation and machine learning, including clinical recommender systems and remote patient monitoring platforms.

More recently at the Gates Foundation, I’ve facilitated enterprise AI prioritization workshops and contributed to the design and evaluation of an internal travel chatbot.

Across these efforts, my focus has been on how AI-enabled systems intersect with real workflows:

- How AI recommendations, confidence levels, and source material are presented transparently

- How humans review and override recommendations based on expert judgment

- How conversation and handoffs between AI and humans flow, feel, and sound

- How to pilot and evaluate probabilistic systems before broader rollout

I’m particularly interested in building AI-enabled products where trust, usability, and accountability matter as much as speed and technical capability.

AI Project Highlights

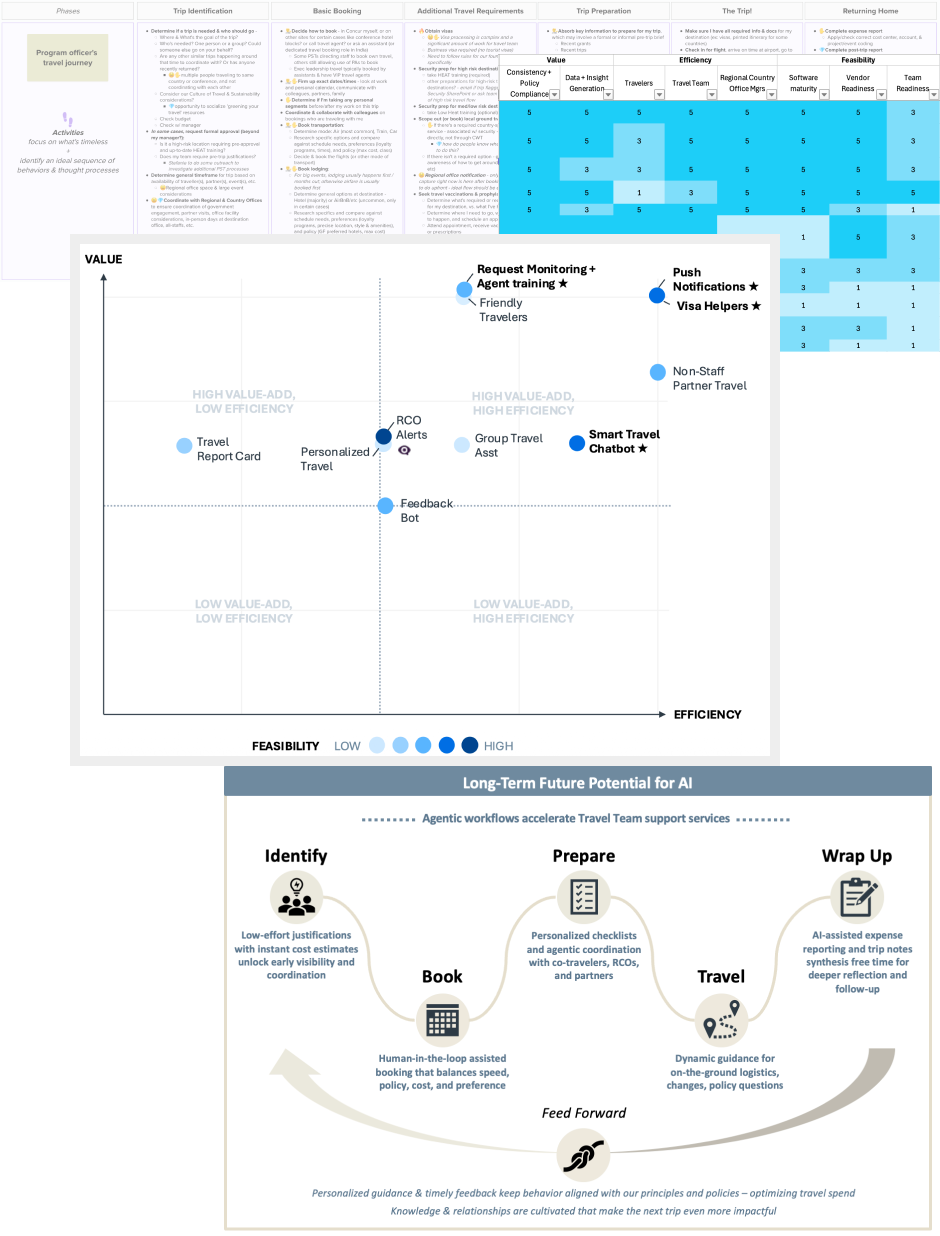

Gates Foundation – AI Opportunity Prioritization

When senior leadership directed the organization to explore AI's potential for process transformation, the foundation's CIO called me in to help. Two high-impact functions – Travel and HR Talent Acquisition – were identified as initial focus areas.

I partnered with a program manager from the COO's office. We started by getting up to speed on where each team was coming from, so that we could meet them where they were.

I led rapid journey mapping workshops with each team to map current end-to-end experiences, surfacing pain points and AI opportunity areas. We captured 38 rough ideas across both teams.

There were more ideas than the teams had capacity to pursue, so we developed a structured evaluation rubric to score ideas for potential efficiency gains, business value impact, user benefit, and feasibility. Working sessions with each team yielded a prioritized landscape of opportunities and a leadership-ready narrative outlining tradeoffs, sequencing, and realistic next steps.

Ultimately, four pilot concepts moved forward. Equally important were the ideas that did not – they're not off the table, just "not yet." In addition to finite staff capacity, these teams are deeply dependent on vendors like Workday and Concur. Waiting for native AI capabilities can sometimes be more strategic than building custom solutions prematurely. This experience reinforced that in enterprise AI adoption, timing and integration constraints are often more important than creativity. For internal efficiency goals without competitive pressure, sometimes the right AI strategy is patience.

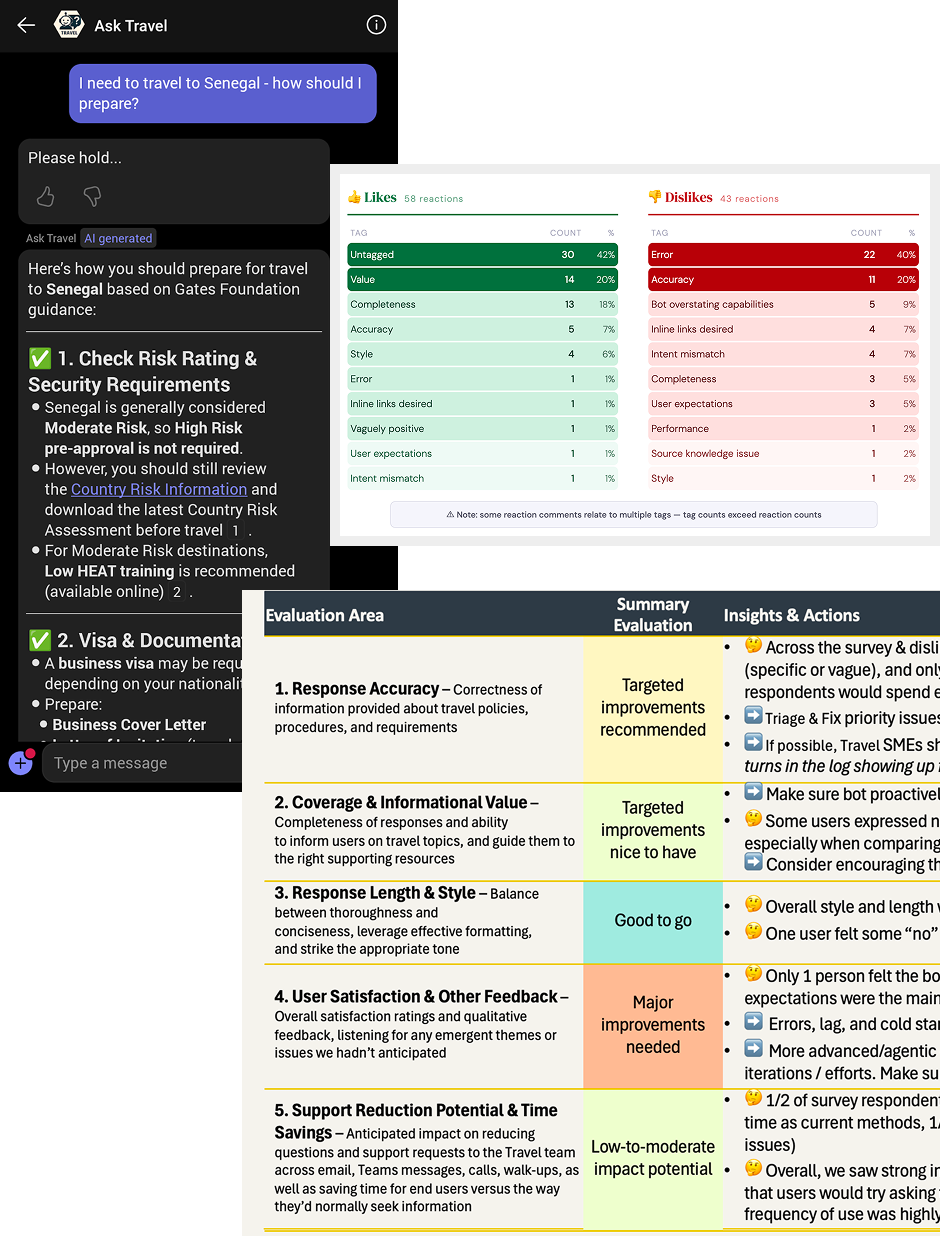

Gates Foundation – "Ask Travel" Chatbot

The Gates Foundation’s global travel program is genuinely complex. Staff travel internationally under varying visa requirements and security constraints, and inaccurate guidance can have real human and operational consequences.

When the Travel team and IT developed an MVP chatbot in Microsoft Copilot Studio to answer policy, visa, logistics, and security questions, the central question was not just whether it “worked” but whether it was reliable enough for organization-wide rollout. I designed and led a multi-method pilot to assess readiness for scale. That included:

- 1:1 usability sessions

- Live group drop-in testing

- Asynchronous testing

- Exit survey

I deliberately recruited staff travelers and travel arrangers to participate rather than relying solely on SME testing. Everyday users phrase questions differently than experts, and in a probabilistic generative AI system, phrasing variation can produce materially different outcomes.

Across 21 participants, 11 survey responses, and 100+ thumbs-up/down feedback comments, I surfaced and helped triage ~22 issues in collaboration with technical and travel stakeholders.

I worked with the IT team to identify source content issues that were impacting response accuracy, and to address disruptive errors and lag with lightweight solutions grounded in Nielsen's heuristics. I also helped refine the bot's tone and standard messaging to ensure it clearly communicated its scope and limitations to new users.

After resolving the most critical issues, the chatbot is launching to all staff in March 2026. The evaluation framework I developed for perceived accuracy, completeness, usability, and trust is now a reusable resource for several other internal chatbot projects underway at the Foundation.

Mad*Pow Project – IBM Watson for WellPoint

Early in my career at Mad*Pow, I designed one of the first human-in-the-loop interfaces for a clinical AI recommender system built for a major health insurer and claims processor, powered by IBM's Watson AI.

The system analyzed patient records against clinical guidelines, medical research, utilization policies, and cost data to generate ranked treatment recommendations with associated confidence levels and cited evidence.

I designed workflows for two very different users: in-network oncologists exploring treatment options for their patients, and utilization management staff reviewing pre-authorization requests for medical necessity. That included:

- How recommendations, confidence scores, and source material were presented

- How users could accept, question, or override AI suggestions – informing model improvement over time

- How cases could be escalated – such as an oncologist appealing an automated pre-authorization decision, or a utilization management nurse escalating to a staff oncologist

What began as a 2-week prototyping engagement extended to a year-long collaboration based on client satisfaction with my work.

Watson's broader healthcare trajectory was more complicated – issues with the underlying technology and synthetic training data led to questionable recommendations and results that didn't consistently live up to the hype.

Pre-authorization and evidence-based decision-making remain challenges in the industry. I'm optimistic about what modern AI can bring to them, and grounded in what it requires: accuracy, transparency, and safety have to be non-negotiable when people's lives depend on the output. I'd work on this problem space again in a heartbeat.

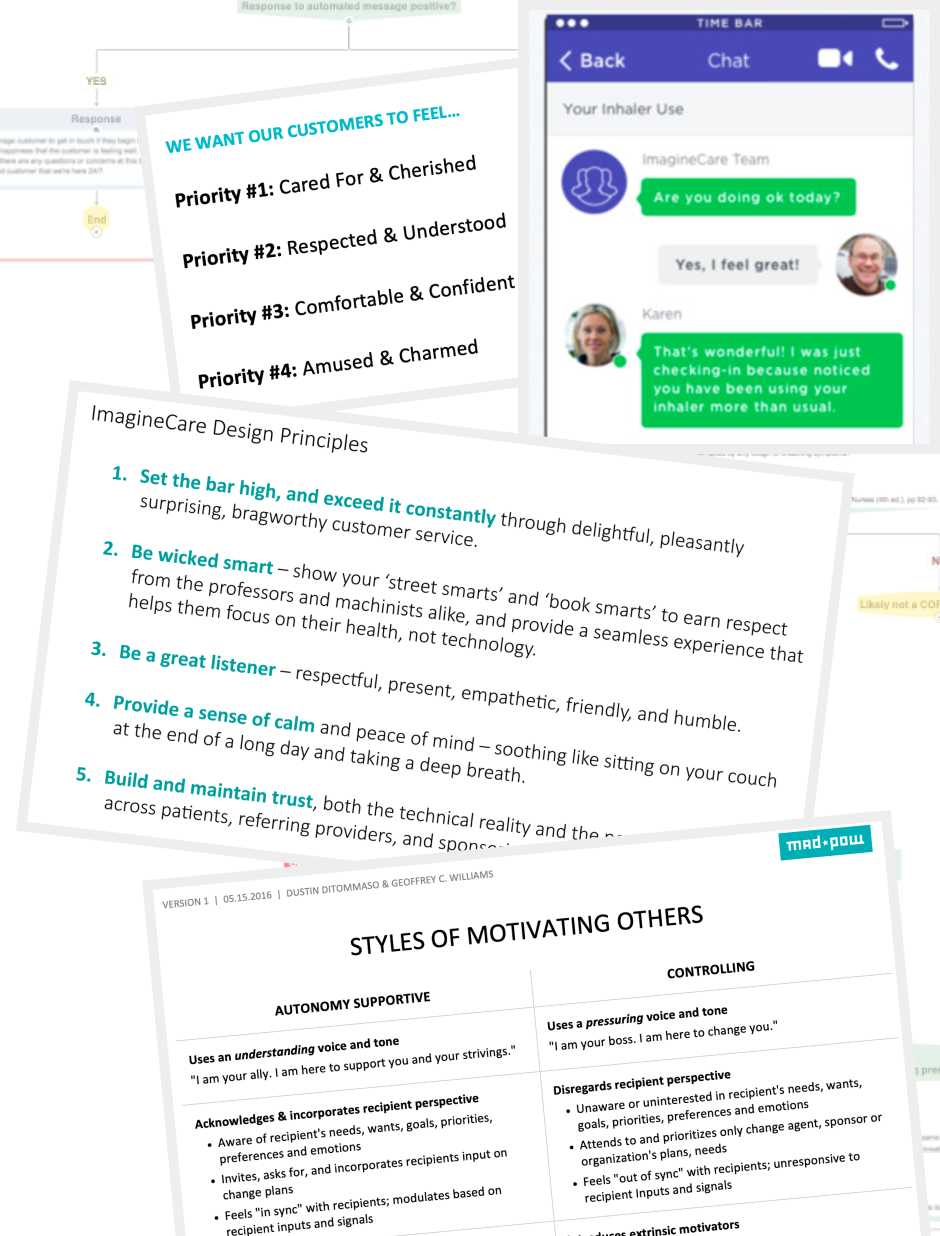

Mad*Pow Project – ImagineCare

ImagineCare was a remote patient monitoring service built in collaboration with clinicians at Dartmouth-Hitchcock Medical Center. The platform blended human care team support with machine-learning-powered automation to help patients manage chronic conditions at home and on the go. Sensor data and mobile inputs were processed through clinical and personalization algorithms – flagging anomalies and triggering either automated outreach or human care team intervention.

My work defined the interaction model between automated systems and clinicians – including bot-to-human handoffs, the queuing of triage actions for human staff, and the overall tone and style of the system. The bot's tone was influenced by design principle workshops I facilitated with the clinical product team, as well as a behavior change design workshop facilitated by my colleague and mentor Dustin DiTommaso.

My user research revealed that messages with a human name and photo felt more motivating. However, we made a deliberate design decision not to disguise automated messages as human. We instead used a clearly labeled “ImagineCare Team” identity for automated outreach, preserving authenticity and trust even at the potential cost of engagement.

The result was a system that balanced automation and human care responsibly – achieving 4x the national benchmark for wellness program engagement and measurable reductions in hospital admissions and emergency room costs.

Read the full ImagineCare case study or explore other case studies.